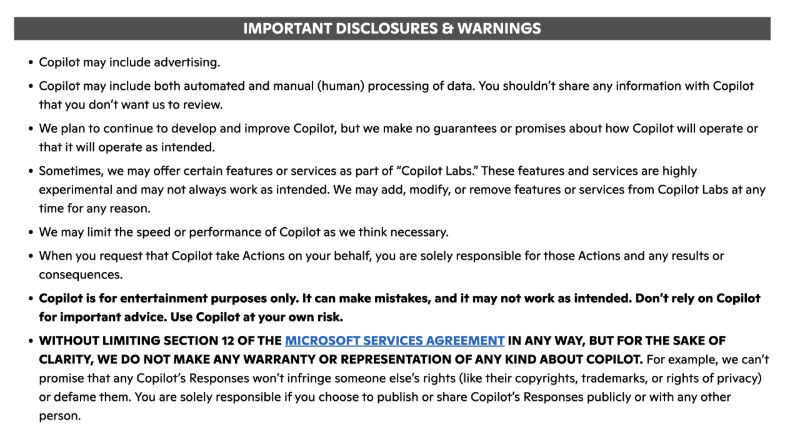

This week, we discovered that Microsoft's Copilot AI tool is described as 'for entertainment purposes only' within its own terms and conditions. The detail went largely unnoticed for a while, as Microsoft last (quietly) updated Copilot's terms of use on October 24, 2025. It's only now that the disclaimer is making the rounds on social media, and people aren't impressed. As you can see from the screenshot of the terms below, it reads: “Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

Given that Microsoft markets this 'powerful' tool for serious business use, how seriously should we take this small print? Does this kind of language suggest that Microsoft doesn't trust its own products? If so, why should we trust them when we use them? It's similar to the language used by psychics, tarot readers and fortune tellers, who use exact phrasing to prevent legal repercussions. A quick Google of local psychics in the UK will bring up website terms and conditions with phrasing like 'All psychic services advertised on this site are strictly for entertainment only', which is required by law.

This may also be Microsoft's way of protecting itself from more legal woes, especially after OpenAI (Microsoft's partner and the maker of ChatGPT) found itself in hot water back in 2023 over training data scraped not only from the internet but also from non-fiction books, with authors seeking damages in lawsuits.

Microsoft itself has described the questionable wording as 'legacy language' from 'when Copilot originally launched as a search companion service in Bing'. It says the language will be changed in its next terms and conditions update.

AI tools and agents have been hailed as one of the most important technological advances since the Industrial Revolution. But when a company doesn't stand by the accuracy of a product, or by its intended purpose or design, it can be worrying for users and damaging for the brand's reputation. It's a pattern we're seeing more of: AI companies make grand claims about the abilities of their tools, yet simultaneously disclaim responsibility for factual mistakes, inaccuracies or 'hallucinations', so that they're covered against legal liability.

There's a growing gap between what AI tools and companies promise and what they actually deliver. In the meantime, we watch and wait to see how things play out, especially when companies like xAI, Anthropic and OpenAI are due for record-smashing IPOs.