By Emily Haddington

As a professional copywriter, I take pride in saying that everything I write comes from my own brain as a result of research. I haven't just put in a lazy prompt and lifted and shifted large swathes of slop from a large language model, passing it off as my own. Given how much copy I see day to day in the marketing world, I also like to think I'm pretty good at spotting a ChatGPT output from a mile away. The quick 'tells' are the super long dashes, two-word sentences in succession, vague summarising and overuse of words like 'align', 'leverage' and 'elevate' (who even used those words before AI came along?!).

Over the last six months, however, I've noticed that the lines are becoming increasingly blurred. People are failing to see the difference between AI and human copywriting, to the point where they're starting to accuse others of using AI when they haven't. Marketing and copywriting professionals on social media (especially LinkedIn) are finding that people are quick to accuse them of using AI to write their posts, even though the words are their own, from their own mind.

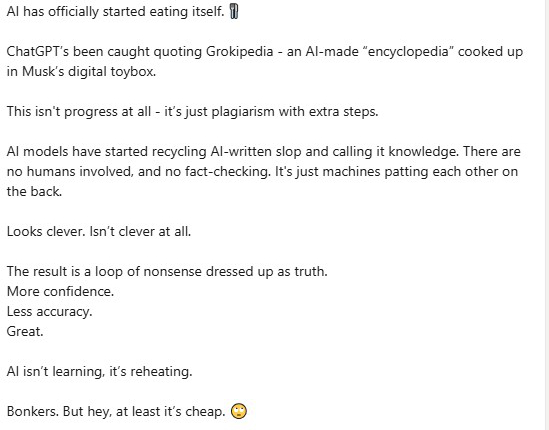

Take the following post for example that I found on Linkedin:

Beneath this post, I was struck by the sheer volume of comments from people accusing this person of using AI to compose the message. (I'll admit, I spent some time second-guessing myself before concluding that AI probably didn't write it.) It's likely the writer chose to structure the piece using short sentences because they wanted it to read with more impact.

It certainly got traction, but it raises a very important issue. We're now at the stage where genuine human content is being treated with suspicion. With language models replicating different styles (or attempting to), are we now saying that writing in shorter sentences puts us at risk of being called a bot? Do we have to continually change and evolve our natural writing voices as copywriters to 'prove' in some way that we are actually human?

The rise of AI copy detectors

There are many types of AI detection software out there, which some companies and academic institutions use to determine whether or not something has been written by a human. However, these tools have a habit of producing false positives, leading to accusations of misconduct (in the case of students) or of cutting corners (in the case of copywriting professionals). In 2023, OpenAI, the company behind ChatGPT, actually closed down its own AI detection tool because of its low accuracy.

AI copy detectors have a tendency to flag structured and formal writing as AI copy, regardless of whether it's authentically produced by a human writer. By contrast, copy originally created by AI and then heavily edited, changed or paraphrased by humans often passes through as a completely human creation. Within an academic setting, AI detectors also misidentify the writing styles of people who speak English as a second language, or those who are neurodivergent. Their writing is sometimes classified as AI-generated, too.

It's important to remember that AI detectors cannot understand language, so when they 'read' copy, they're not actually reading and assessing anything. They're just checking for patterns they've been trained to recognise. AI models are also frequently updated, making it even more difficult for detectors to keep their pattern recognition current.

The problem with 'proving' something is real copy

I once saw a post on LinkedIn from a professional copywriter who claimed that their client had run their copy through an AI detector twice over, using its results as 'proof' to avoid paying an invoice for genuine human work. The writer delivered the copy the first time around, and it was rejected by the client's AI detector. The client asked for it to be rewritten without AI. To keep the client sweet, the writer offered to do a rewrite for free, despite the fact that the copy was completely from their own brain. Second time around, the copy was rejected again and flagged as AI by the detector. The client therefore did not pay the writer's invoice, locking both parties into a difficult situation that could potentially involve legal action and the additional challenge of somehow proving in law that the copy either was or wasn't written by a language model.

I've seen plenty of angry posts on social media from fellow copywriters who have been wrongly accused by clients of using AI to write copy. Some clients even refuse to pay invoices until the writer has completely rewritten the entire piece and recorded or filmed their desktop as proof. It sounds extreme, but a key concern of mine is that clients could start 'AI-washing' as a loophole to avoid paying for services. By baselessly claiming “it looks like AI”, cynical clients now have a perfect excuse to devalue our craft and stall on payments. Any client using this technique will find that the ongoing professional relationship well and truly sours. No copywriter is going to work for a client that doesn't trust them.

We still need human writers

When AI came along, many companies rejoiced at the amount of money they'd save. They'd no longer need a human copywriter. For some, though, this joy was short-lived once they saw the output quality. Sentences without any soul or thought were being churned out robotically, and some were factually incorrect or biased. This is where AI falls short, and why we still need human copywriters.

Content created by AI language models may sound polished and professional, but it doesn't have depth, flair or originality. This is because AI learns from patterns, picking up its phrasing from vast volumes of data. Words like 'elevate', 'align' and 'amplify' lack warmth and sophistication, and aren't words you'd commonly hear from a human mouth unless you're in a highly corporate environment. The structure of an AI's output also feels bland, repetitive and unnatural.

Copywriting isn't actually about making up clever phrasing or turning out content for the sake of it to boost rankings. It's the science of selling a product or service through human language. It helps people (customers) to connect with a brand. To do their job properly, copywriters need data, empathy, and a good understanding of human psychology and decision-making.

Copywriting isn't just about writing to persuade – it's also about creating connections with people, and helping them to solve their problems. It understands an audience and speaks directly to them. It shares brand stories, and makes products and services feel relatable. It isn't just show and tell – human copywriting really understands the nuance and tone of connection. AI can only mimic this – it can't evolve a tone based on a brand's transformation, or evoke the emotion that makes words dance on paper. AI doesn't have personality, empathy or emotional intelligence. These are all things we need as humans when we communicate. That's what makes great copy, and that's why there will always be a market for people who need quality, authentic copywriting.